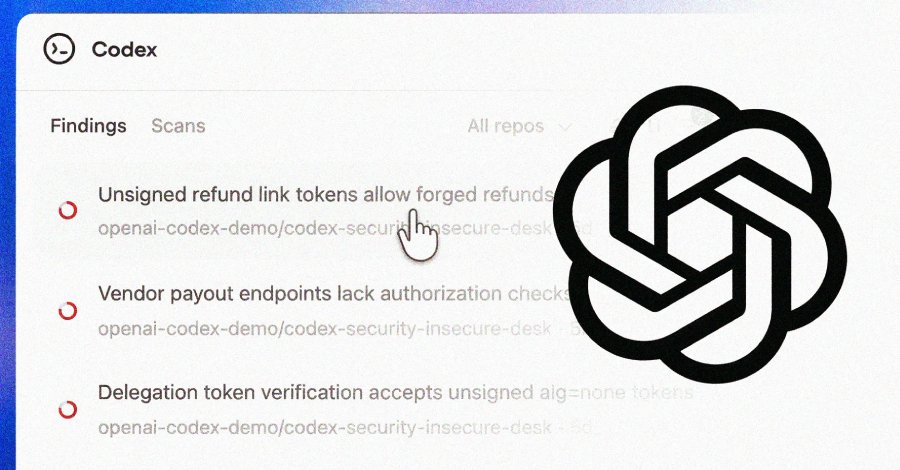

OpenAI on Friday began rolling out Codex Security, an artificial intelligence (AI)-powered security agent designed to find, validate, and propose fixes for vulnerabilities.

The feature is available as a research preview to ChatGPT Pro, Enterprise, Business, and Edu customers via Codex web, with free usage for the next month.

“It builds deep context about your project to identify complex vulnerabilities that other agentic tools miss, surfacing higher-confidence findings with fixes that meaningfully improve the security of your system while sparing you from the noise of insignificant bugs,” the company said.

Codex Security is an evolution of Aardvark, which OpenAI unveiled in private beta in October 2025 as a way for developers and security teams to detect and fix security vulnerabilities at scale.

Over the last 30 days of the beta, Codex Security scanned more than 1.2 million commits across external repositories, identifying 792 critical findings and 10,561 high-severity findings. These include vulnerabilities in various open-source projects such as OpenSSH, GnuTLS, GOGS, Thorium, libssh, PHP, and Chromium, among others. Some of them are listed below:

- GnuPG – CVE-2026-24881, CVE-2026-24882

- GnuTLS – CVE-2025-32988, CVE-2025-32989

- GOGS – CVE-2025-64175, CVE-2026-25242

- Thorium – CVE-2025-35430, CVE-2025-35431, CVE-2025-35432, CVE-2025-35433, CVE-2025-35434, CVE-2025-35435, CVE-2025-35436

According to the AI company, the latest iteration of the application security agent leverages the reasoning capabilities of its frontier models and combines them with automated validation to minimize the risk of false positives and deliver actionable fixes.

OpenAI’s repeated scans of the same repositories have shown increasing precision and declining false positive rates, with the latter falling by more than 50% across all repositories.

In a statement shared with The Hacker News, OpenAI said Codex Security is designed to improve the signal-to-noise ratio by grounding vulnerability discovery in system context and validating findings before surfacing them to users.

Specifically, the agent works in three steps. First, it analyzes a repository to understand the security-relevant structure of the system and generates an editable threat model that captures what the system does and where it is most exposed.

Once the system context is built, Codex Security uses it as a foundation to identify vulnerabilities and classify findings based on their real-world impact. Flagged issues are then pressure-tested in a sandboxed environment to validate them.

“When Codex Security is configured with an environment tailored to your project, it can validate potential issues directly in the context of the running system,” OpenAI said. “That deeper validation can reduce false positives even further and enable the creation of working proofs-of-concept, giving security teams stronger evidence and a clearer path to remediation.”

In the final stage, the agent proposes fixes that best align with the system’s behavior, so as to reduce regressions and make the fixes easier to review and deploy.
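To make the three-step workflow described above more concrete, here is a toy triage pipeline in Python. It is not OpenAI’s implementation, which has not been published; every name in it (`Severity`, `ThreatModel`, `Finding`, `classify`, `triage`), the one-level downgrade rule for unexposed components, and the lambdas standing in for sandboxed validation and patch generation are hypothetical assumptions. The sketch only illustrates how a threat model can rank findings, how unconfirmed findings can be dropped, and how a candidate fix can be attached before anything reaches a user.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable


class Severity(IntEnum):
    # Ordered so that higher values mean more urgent findings.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class ThreatModel:
    # Stage 1 output (hypothetical shape): components reachable by untrusted
    # input. A human can edit this set before the rest of the pipeline runs.
    exposed_components: set[str] = field(default_factory=set)


@dataclass
class Finding:
    component: str
    description: str
    base_severity: Severity
    fix: str | None = None


def classify(finding: Finding, model: ThreatModel) -> Severity:
    # Stage 2 (assumed rule, not OpenAI's): keep the severity of findings in
    # exposed components and downgrade everything else by one level, as a
    # crude stand-in for "real-world impact".
    if finding.component in model.exposed_components:
        return finding.base_severity
    return Severity(max(Severity.LOW, finding.base_severity - 1))


def triage(
    findings: list[Finding],
    model: ThreatModel,
    validate: Callable[[Finding], bool],
    propose_fix: Callable[[Finding], str],
) -> list[tuple[Severity, Finding]]:
    results: list[tuple[Severity, Finding]] = []
    for finding in findings:
        # Stage 2b: drop anything the (hypothetical) sandbox check cannot confirm.
        if not validate(finding):
            continue
        # Stage 3: attach a candidate patch for human review (mutates the finding).
        finding.fix = propose_fix(finding)
        results.append((classify(finding, model), finding))
    # Most severe first; Python's sort is stable, so ties keep input order.
    results.sort(key=lambda pair: pair[0], reverse=True)
    return results


# Usage with made-up data; the lambdas stand in for sandboxed validation
# and patch generation.
model = ThreatModel(exposed_components={"http_parser"})
findings = [
    Finding("http_parser", "heap overflow in header parsing", Severity.CRITICAL),
    Finding("cli_tool", "unchecked return value", Severity.HIGH),
    Finding("logging", "format-string warning", Severity.MEDIUM),
]
confirmed = triage(
    findings,
    model,
    validate=lambda f: f.component != "logging",
    propose_fix=lambda f: f"patch for {f.component}",
)
for severity, finding in confirmed:
    print(severity.name, finding.component, finding.fix)
# CRITICAL http_parser patch for http_parser
# MEDIUM cli_tool patch for cli_tool
```

Passing validation and fix generation in as callables keeps the sketch independent of any particular sandbox or model; in a real system those would be the expensive, context-dependent steps.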

News of Codex Security comes weeks after Anthropic launched Claude Code Security to help users scan software codebases for vulnerabilities and suggest patches.