In today’s hospitals and clinics, a dermatologist might use an artificial intelligence model for classifying skin lesions to assess whether a lesion is at risk of developing into cancer or is benign. But if the model is biased toward certain skin tones, it could fail to identify a high-risk patient.

Perhaps one of the best-known and most persistent challenges that AI research continues to reckon with is bias. Bias is often discussed in relation to training data, but model architecture can also contain and amplify bias, negatively influencing model performance in real-world settings. In high-stakes medical scenarios, the very real consequences of poor performance have made bias a quintessential safety issue.

A new paper from researchers at MIT, Worcester Polytechnic Institute, and Google, accepted to the 2026 International Conference on Learning Representations, proposes a novel debiasing approach called “Weighted Rotational DebiasING” (i.e., WRING) that can be applied to vision language models (VLMs), such as OpenCLIP, an open-source implementation of OpenAI’s CLIP.

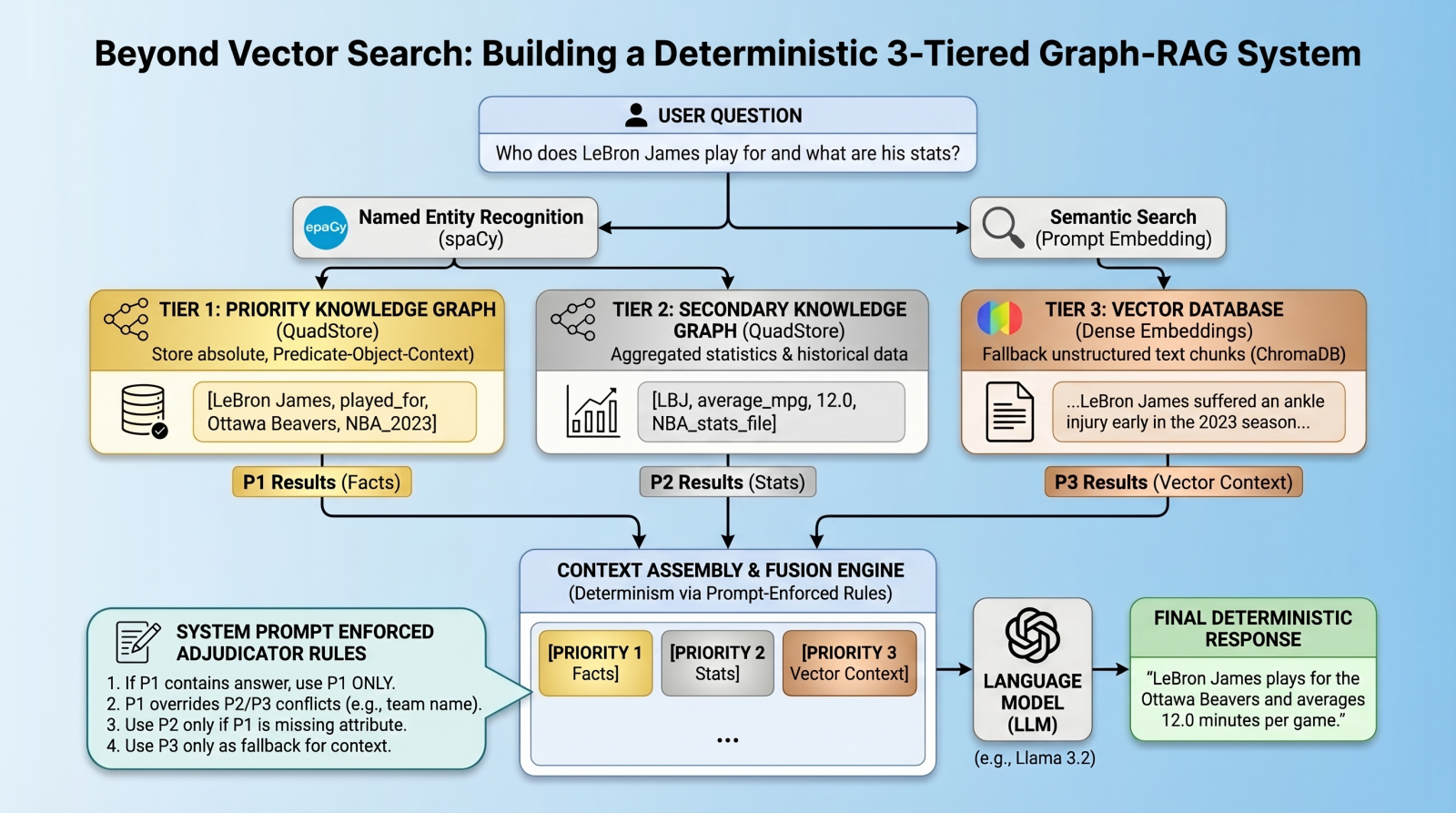

VLMs are multimodal models that can understand and interpret different data modalities, like video, image, and text, simultaneously. While debiasing approaches for VLMs do exist, the most commonly used approach is called “projection debiasing,” which leads to what has been termed the “Whac-A-Mole dilemma,” an empirical observation that was formally introduced to AI research in 2023.

Projection debiasing is a post-processing technique that removes unwanted, biased information from model embeddings by “projecting” the subspace that encodes the bias out of the model’s representation space, thereby cutting out the bias. But this approach has its drawbacks.

“When you do that, you inadvertently squish everything around,” says Walter Gerych, the paper’s first author, who conducted this research last year as a postdoc at MIT. “All the other relationships that the model learns change when you do that.”
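The side effect Gerych describes can be seen in a toy example. The sketch below is a minimal illustration of projection debiasing with a single bias direction, not code from the paper: the three-dimensional embeddings, the `bias_dir` vector, and the helper names are all invented for illustration, and real VLM embeddings have hundreds of dimensions.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    n = math.sqrt(dot(v, v))
    return [a / n for a in v]

def cosine(u, v):
    return dot(normalize(u), normalize(v))

def project_out(v, bias_dir):
    """Remove the component of v that lies along bias_dir."""
    b = normalize(bias_dir)
    coeff = dot(v, b)
    return [a - coeff * x for a, x in zip(v, b)]

# Hypothetical direction that encodes a protected attribute.
bias_dir = [1.0, 0.0, 0.0]

# Hypothetical embeddings for two concepts (made-up values).
doctor = [0.6, 0.8, 0.0]
surgeon = [0.6, 0.0, 0.8]

doctor_debiased = project_out(doctor, bias_dir)
surgeon_debiased = project_out(surgeon, bias_dir)

# The bias component is gone from the debiased embedding...
print("bias component:", dot(doctor_debiased, normalize(bias_dir)))

# ...but the similarity between the two concepts has changed as well.
print("similarity before:", cosine(doctor, surgeon))
print("similarity after: ", cosine(doctor_debiased, surgeon_debiased))
```

In this toy setup the two concepts start out moderately similar and end up orthogonal after projection, even though their relationship to each other was never the target of the debiasing step.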

Gerych, who is now an assistant professor of computer science at Worcester Polytechnic Institute, is joined on the paper by MIT graduate students Cassandra Parent and Quinn Perian; Google’s Rafiya Javed; and MIT associate professors of electrical engineering Justin Solomon and Marzyeh Ghassemi, who is an affiliate of the Abdul Latif Jameel Clinic for Machine Learning in Health and the Laboratory for Information and Decision Systems.

While projection debiasing stops the model from acting upon the bias that has been projected out, it can end up amplifying and creating other biases, hence the Whac-A-Mole dilemma. According to Ghassemi, the unintended amplification of model biases is “both a technical and practical challenge. For instance, when debiasing a VLM that retrieves images of clinical staff, if racial bias is removed, it may have the unintended consequence of amplifying gender bias.”

WRING works by moving certain coordinates within the high-dimensional space of a model (the ones that appear to be responsible for bias) to a different angle, so the model can no longer distinguish between different groups within a certain concept. This changes the representation within a specific space while leaving the model’s other relationships intact. And like projection debiasing, WRING is a post-processing technique, which means it can be applied “on the fly” to a pre-trained VLM.

“People already spent a lot of resources, a lot of money, training these huge models, and we don’t really want to go in and modify something during training because then you have to start from scratch,” Gerych explains. “[WRING is] very efficient. It doesn’t require more training of the model and it’s minimally invasive.”
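The geometric intuition behind rotating rather than projecting can be sketched in a few lines. This is not the WRING algorithm: the article does not say how the method chooses which coordinates to rotate, by what angle, or how the weighting works. The sketch only shows a generic property of rotations confined to a plane, using a made-up four-dimensional vector and hypothetical plane directions `u` and `w`: coordinates outside the plane are untouched and the vector’s length is preserved.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def norm(v):
    return math.sqrt(dot(v, v))

def rotate_in_plane(v, u, w, theta):
    """Rotate v by angle theta inside the plane spanned by orthonormal u and w.

    Components of v orthogonal to that plane are left unchanged.
    """
    a, b = dot(v, u), dot(v, w)                       # coordinates in the plane
    a_new = a * math.cos(theta) - b * math.sin(theta)
    b_new = a * math.sin(theta) + b * math.cos(theta)
    return [x + (a_new - a) * ui + (b_new - b) * wi
            for x, ui, wi in zip(v, u, w)]

# Hypothetical orthonormal directions spanning the plane to rotate in.
u = [1.0, 0.0, 0.0, 0.0]
w = [0.0, 1.0, 0.0, 0.0]

# Made-up embedding; the last two coordinates stand in for other relationships.
embedding = [0.6, 0.0, 0.7, 0.1]

rotated = rotate_in_plane(embedding, u, w, math.pi / 2)

print("rotated:", rotated)
print("length before:", norm(embedding), "after:", norm(rotated))
```

Unlike projection, which zeroes out a component and shrinks the vector, the rotation moves information within the chosen plane and leaves the remaining coordinates exactly where they were.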

In their results, the researchers found that WRING significantly reduced bias for a target concept without increasing bias in other areas. For now, however, the approach is limited to Contrastive Language-Image Pre-training (CLIP) models, a type of VLM that connects images to language for search or classification.

“Extending this to ChatGPT-style generative language models is the reasonable next step for us,” says Gerych.

This work was supported, in part, by a National Science Foundation CAREER Award, AI2050 Award Early Career Fellowship, Sloan Research Fellow Award, the Gordon and Betty Moore Foundation Award, and MIT-Google Computing Innovation Award.