Does your chatbot know too much? Here's why you should think twice before you tell your AI companion everything.

17 Nov 2025

4 min. read

In the movie “Her,” the film’s protagonist strikes up an ultimately doomed romantic relationship with a sophisticated AI system. At the time of its release in 2013, such a scenario was firmly in the realm of science fiction. But with the emergence of generative AI (GenAI) and large language models (LLMs), it’s no longer such an outlandish prospect. In fact, “companion” apps are proliferating today.

However, there are inevitably risks associated with hooking up with an AI bot. How do you know your personal information won’t be shared with third parties? Or stolen by hackers? The answers to questions like these will help you decide whether it’s all worth the risk.

Looking for (digital) love

Companion apps meet a growing market demand. AI girlfriends and boyfriends harness the power of LLMs and natural language processing (NLP) to interact with their users in a conversational, highly personalised way. Titles like Character.AI, Nomi and Replika fill a psychological and sometimes romantic need for those who use them. It’s not hard to see why developers are keen to enter this space.

Even the big platforms are catching up. OpenAI recently said it will soon roll out “erotica for verified adults,” and may allow developers to create “mature” apps built on ChatGPT. Elon Musk’s xAI has also launched flirtatious AI companions in its Grok app.

Research published in July found that nearly three-quarters of teens have used AI companions, and half do so regularly. More worryingly, a third have chosen AI bots over humans for serious conversations, and a quarter have shared personal information with them.

That’s particularly concerning as cautionary tales begin to emerge. In October, researchers warned that two AI companion apps (Chattee Chat and GiMe Chat) had unwittingly exposed highly sensitive user information. A misconfigured Kafka broker instance left the streaming and content delivery systems for these apps with no access controls. That meant anyone could have accessed over 600,000 user-submitted photos, IP addresses, and millions of intimate conversations belonging to over 400,000 users.

The risks of hooking up with a bot

Opportunistic threat actors may sense a new way to make money. The information shared by victims in romantic conversations with their AI companion is ripe for blackmail. Images, videos and audio could be fed into deepfake tools for use in sextortion scams, for example. Or personal information could be sold on the dark web for use in follow-on identity fraud. Depending on the security posture of the app, hackers may also be able to get hold of credit card information stored for in-app purchases. According to Cybernews, some users spend thousands of dollars on such purchases.

As the example above suggests, revenue generation rather than cybersecurity is often the priority for AI app developers. That means threat actors may be able to find vulnerabilities or misconfigurations to exploit. They might even try their hand at creating their own lookalike companion apps that hide malicious information-stealing code, or that manipulate users into divulging sensitive details which can be used for fraud or blackmail.

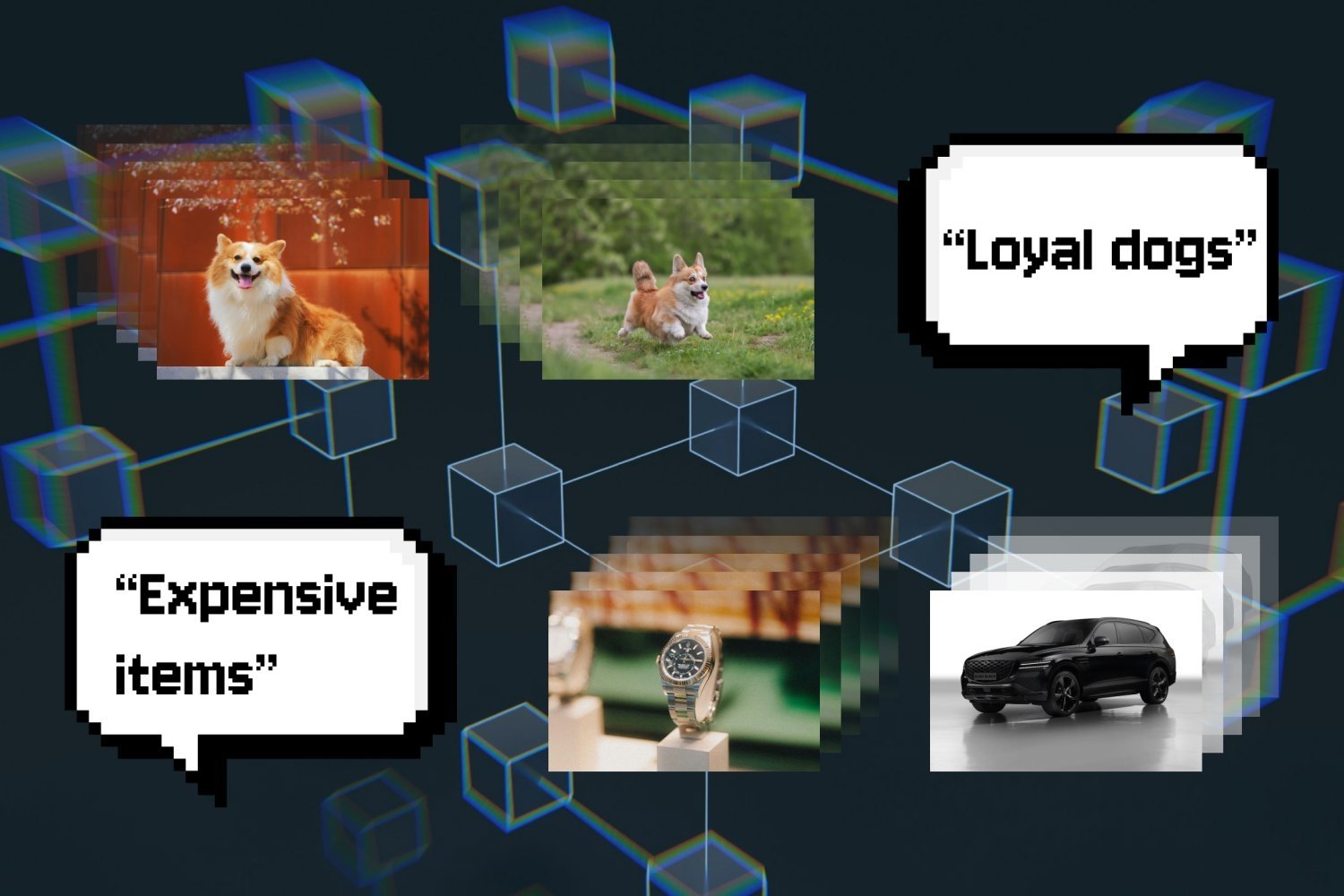

Even if your app is relatively secure, it may still be a privacy risk. Some developers collect as much information on their users as possible so they can sell it on to third-party advertisers. Opaque privacy policies may make it hard to understand if, or how, your data is protected. You may also find that the information and conversations you share with your companion are used to train or fine-tune the underlying LLM, which further exacerbates privacy and security risks.

How to keep your family safe

Whether you’re using an AI companion app yourself or are concerned about your children doing so, the advice is the same. Assume the AI has no security or privacy guardrails built in. And don’t share any personal or financial information with it that you wouldn’t be comfortable sharing with a stranger. This includes potentially embarrassing or revealing photos/videos.

Even better, if you or your kids want to try out one of these apps, do your research ahead of time to find the ones that offer the best security and privacy protections. That will mean reading the privacy policies to understand how they use and/or share your data. Avoid any that aren’t explicit about intended usage, or that admit to selling user data.

Once you’ve found your app, be sure to switch on security features like two-factor authentication. This will help prevent account takeovers using stolen or brute-forced passwords. And explore its privacy settings to dial up protections. For example, there may be an option to opt out of having your conversations stored for model training.

If you’re worried about the security, privacy and psychological implications of your kids using these tools, start a conversation with them to find out more. Remind them of the risks of oversharing, and emphasize that these apps are tools for profit that don’t have their users’ best interests at heart. If you’re concerned about the impact they may be having on your children, it may be necessary to put limits on screen time and usage, potentially enforced via parental monitoring controls/apps.

It goes without saying that you shouldn’t allow any AI companion apps whose age verification and content moderation policies don’t offer sufficient protections for your children.

It remains to be seen whether regulators will step in to enforce stricter rules around what developers can and can’t do in this realm. Romance bots operate in something of a gray area at present, although an upcoming Digital Fairness Act in the EU could restrict excessively addictive and personalised experiences.

Until developers and regulators catch up, it may be better not to treat AI companions as confidants or emotional crutches.